LMS3821-PC5 OSFP Transceiver 8x100G 2xDR4 500m

- Technology

- Fibre optic transceivers

- Partner

- Ligent

The LMS3821-PC5 is a high-performance 800 Gbit/s optical transceiver in OSFP form factor, designed for next-generation AI and HPC networking. It essentially combines two 400G DR4 transceiver engines into one module, enabling ultra-fast dual 400G links through a single OSFP slot. Using eight 100G PAM4 lanes over 1310 nm single-mode fibre, it supports reaches of up to 500 metres – ideal for connecting racks of GPU servers or spine switches within a data hall.

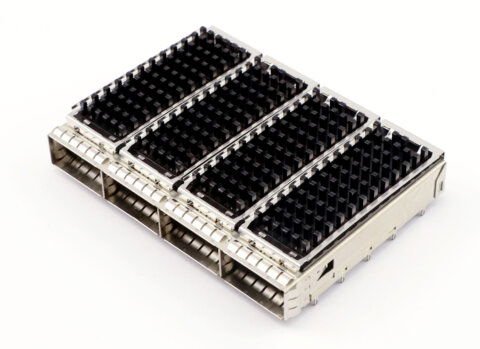

This module operates seamlessly in both InfiniBand and standard Ethernet environments, providing flexibility for deployment in specialised supercomputing fabrics as well as cutting-edge 800G Ethernet networks. It is offered in both finned heatsink and flat-top variants, ensuring compatibility with traditional air-cooled systems and modern liquid-cooled GPU clusters (for example, in NVIDIA DGX™ setups).

With its massive throughput and low-latency characteristics, the LMS3821-PC5 transceiver is purpose-built to meet the rising demands of AI training clusters, high-performance computing interconnects, and ultra-high bandwidth cloud infrastructure – all in a compact, hot-pluggable module.

Range features

A high-level overview of what this range offers

- 800 Gb/s aggregate throughput – Combines two 400G links in one module, maximising bandwidth per port and reducing the number of transceivers required for high-speed links.

- Dual 400G DR4 architecture – Allows simultaneous 400G connections to multiple devices from a single module, simplifying complex HPC network topologies and increasing link density.

- 8×100G PAM4 lanes – Utilises advanced PAM4 modulation on eight optical lanes to achieve 800G in a compact form, delivering efficient high-density data transfer for demanding workloads.

- Up to 500 m reach over SMF – Supports data centre spans up to 500 metres on single-mode fibre, suitable for connecting across large halls or adjacent rooms in enterprise and campus facilities.

- InfiniBand & Ethernet compatibility – Interoperable with both low-latency InfiniBand HPC fabrics and 800G Ethernet networks, allowing flexible use in GPU clusters or standard data centre switching.

- HPC cluster optimised – Validated with leading HPC hardware (e.g. NVIDIA® ConnectX-7 NICs and BlueField-3 DPUs) to ensure reliable plug-and-play integration into AI supercomputers and GPU server racks.

- Thermal design options – Available in open-finned and flat-top (RHS) versions: the finned model suits air-cooled systems, while the flat-top variant is designed for liquid-cooled setups with external heat-sinks (e.g. DGX™ servers).

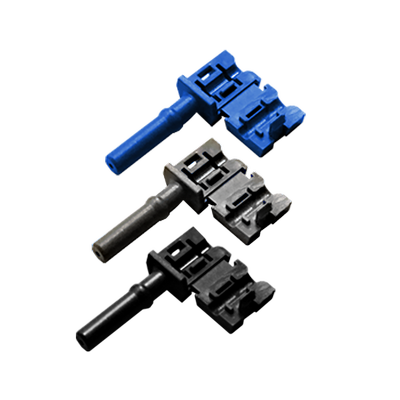

- Hot-pluggable OSFP form factor – Compliant with OSFP MSA standards and supporting CMIS management, it offers easy installation and swap-out, with Class 1 laser safety and RoHS compliance for safe, eco-friendly operation.

What’s in this range?

All the variants in the range and a comparison of what they offer

| Feature | Specification |

|---|---|

Data rate | 800 Gb/s aggregate (2 × 400 Gb/s) |

Modulation | 8 × 100G PAM4 (optical lanes) |

Wavelength | 1310 nm (single-mode) |

Fibre type | Single-mode fibre (9 µm core) |

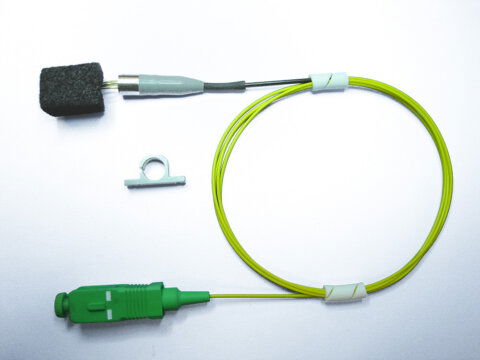

Connectors | 2 × MPO-12 (APC polish) |

Form factor | OSFP (Octal Small Form-factor Pluggable) |

Cooling variant | Flat-top design (RHS-compatible) |

Reach | Up to 500 m on SMF |

Transmit power | –2.4 to +4.0 dBm (per lane) |

Receiver sensitivity | –5.9 dBm (per lane) |

Operating temperature | 0 °C to +70 °C (standard range) |

Power consumption | ≤ 18 W (max); ~2 W in low-power mode |

Power supply | Single 3.3 V DC |

Laser source | EML lasers (Class 1 eye safe) |

Hot pluggable | Yes |

Management interface | CMIS v5.3 compliant |

Firmware security | Secure boot and upgrade supported |

Compliance | OSFP MSA specification; RoHS compliant |
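The transmit power and receiver sensitivity figures in the table can be turned into a rough per-lane loss budget. The sketch below is illustrative only: the fibre attenuation and connector loss values are assumptions rather than datasheet figures, the function names are hypothetical, and the two optical figures are treated as directly comparable, which ignores dispersion and other penalties that a full link design would include.

```python
# Rough per-lane link-budget sketch. Values marked "assumed" are NOT from the datasheet.
TX_POWER_MIN_DBM = -2.4     # from the table: minimum transmit power per lane
RX_SENSITIVITY_DBM = -5.9   # from the table: receiver sensitivity per lane
MAX_REACH_M = 500           # from the table: maximum reach on SMF

FIBRE_LOSS_DB_PER_KM = 0.4  # assumed typical SMF attenuation at 1310 nm
CONNECTOR_LOSS_DB = 0.5     # assumed loss per mated connector pair


def power_budget_db() -> float:
    """Worst-case optical budget: minimum Tx power minus Rx sensitivity."""
    return round(TX_POWER_MIN_DBM - RX_SENSITIVITY_DBM, 2)


def link_margin_db(length_m: float, connector_pairs: int) -> float:
    """Budget left after the assumed fibre and connector losses."""
    if not 0 <= length_m <= MAX_REACH_M:
        raise ValueError(f"length must be between 0 and {MAX_REACH_M} m")
    if connector_pairs < 0:
        raise ValueError("connector_pairs must not be negative")
    fibre_loss = FIBRE_LOSS_DB_PER_KM * length_m / 1000
    connector_loss = CONNECTOR_LOSS_DB * connector_pairs
    return round(power_budget_db() - fibre_loss - connector_loss, 2)


if __name__ == "__main__":
    print("Budget (dB):", power_budget_db())
    print("Margin at 500 m with 2 connector pairs (dB):", link_margin_db(500, 2))
```

With these assumed losses, most of the budget is consumed by connectors rather than by the fibre itself, which is why patch-panel counts matter more than distance on short DR4 links.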

FAQs

"2×DR4" indicates that the transceiver contains two 400 Gb/s DR4 optical engines within a single OSFP module. In practice, it means the module can carry two separate 400G links (each link is a standard DR4 with four 100G lanes) simultaneously. This dual-400G architecture sums up to an 800 Gb/s aggregate throughput in one module, greatly increasing density compared to a single 400G transceiver.

Yes. This module supports both InfiniBand and Ethernet protocols for 800G operation. It is fully compatible with InfiniBand NDR technology (the 400G per link standard used in many GPU clusters) and can equally operate as two 400G channels in an 800G Ethernet environment. This dual compatibility means it can be deployed in HPC fabrics (using InfiniBand for ultra-low latency) or in cutting-edge Ethernet switch infrastructures without issue.

The module uses single-mode fibre (SMF) and MPO-12 optical connectors. Because it carries two 400G links, it has two MPO-12 APC ports on the front, one for each 400G DR4 link. Each MPO-12 connector uses 8 of its 12 fibre positions (4 transmit, 4 receive) for a 400G link. Using standard single-mode fibre (e.g. OS2), the module supports distances up to 500 metres.

Yes, with the appropriate breakout hardware. Each internal 400G DR4 link can be optically broken out into four 100G links if needed (for example, via an MPO-to-LC breakout cable or patch panel). This means one 800G OSFP transceiver could drive up to eight 100G endpoints (4×100G from each 400G group). This is useful for scenarios where you want to distribute the capacity to many nodes or when interfacing with equipment that only has 100G ports.
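The breakout arithmetic described here can be sketched as a simple lane map: eight 100G lanes split across two DR4 ports, four lanes each. The lane numbering and the names `LaneAssignment` and `breakout_map` are hypothetical, chosen for illustration; the actual lane-to-fibre mapping should be taken from the module and host documentation.

```python
from dataclasses import dataclass

LANES_PER_MODULE = 8   # from the text: 8 x 100G PAM4 lanes
LANES_PER_DR4 = 4      # from the text: four 100G lanes per 400G DR4 link
LANE_RATE_GBPS = 100


@dataclass(frozen=True)
class LaneAssignment:
    lane: int        # module lane index 0-7 (illustrative numbering)
    dr4_port: int    # which MPO-12 port / DR4 engine: 0 or 1
    port_lane: int   # lane index within that DR4 link: 0-3
    rate_gbps: int


def breakout_map() -> list[LaneAssignment]:
    """Map each module lane to a DR4 port and a 100G breakout position."""
    return [
        LaneAssignment(lane, lane // LANES_PER_DR4, lane % LANES_PER_DR4, LANE_RATE_GBPS)
        for lane in range(LANES_PER_MODULE)
    ]


def port_rate_gbps(assignments: list[LaneAssignment], dr4_port: int) -> int:
    """Total rate carried by one DR4 port."""
    return sum(a.rate_gbps for a in assignments if a.dr4_port == dr4_port)


if __name__ == "__main__":
    lanes = breakout_map()
    for a in lanes:
        print(f"lane {a.lane}: DR4 port {a.dr4_port}, breakout {a.port_lane}, {a.rate_gbps}G")
    print("Aggregate (Gb/s):", sum(a.rate_gbps for a in lanes))
```

Each entry corresponds to one possible 100G endpoint, so the eight entries match the "up to eight 100G endpoints" figure above.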

This transceiver comes in two cooling form factors: a standard finned-top version and a flat-top RHS version. The finned-top module has an integrated heat-sink with cooling fins and is intended for typical air-cooled deployments (standard network switches and servers with airflow). The flat-top (Riding Heat Sink) version has a smooth top with no fins – it’s designed for systems with external or liquid cooling. You would use the flat-top variant in high-density chassis like advanced GPU servers (e.g. NVIDIA DGX) where a common cold plate or liquid cooling block is pressed on top of the module for heat dissipation. In contrast, the finned version is used in conventional setups that rely on airflow to cool each transceiver individually.

The LMS3821-PC5 module consumes up to approximately 18 W under full load. This is a relatively high power draw (typical for an 800G optical module), so it generates significant heat. Data centre equipment that hosts this module must provide adequate cooling, either robust airflow for the finned version or liquid cooling for the flat-top version, to maintain safe operating temperatures. The OSFP slot provides the required 3.3 V power and is designed for modules in this power range (15–20 W class). When full bandwidth is not needed, the module can enter a low-power mode drawing around 2 W, which reduces thermal load.
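For capacity planning, the per-module figures above scale linearly with port count. The following is a minimal sketch of that arithmetic; the 32-port chassis in the example is a hypothetical configuration, not a statement about any particular switch, and the function name is illustrative.

```python
MODULE_MAX_W = 18.0        # from the text: maximum draw under full load
MODULE_LOW_POWER_W = 2.0   # from the text: approximate low-power draw


def optics_power_w(active_modules: int, low_power_modules: int = 0) -> float:
    """Worst-case power drawn by the transceivers alone, in watts."""
    if active_modules < 0 or low_power_modules < 0:
        raise ValueError("module counts must not be negative")
    return active_modules * MODULE_MAX_W + low_power_modules * MODULE_LOW_POWER_W


if __name__ == "__main__":
    # Hypothetical 32-port chassis, fully populated and fully loaded.
    print("32 active (W):", optics_power_w(32))
    # Same chassis with 8 modules held in the low-power state.
    print("24 active + 8 low-power (W):", optics_power_w(24, 8))
```

The result covers the optics only; the host's own power and cooling budget has to be added on top.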

The primary use case is in high-performance computing and AI clusters that demand extremely high bandwidth between nodes. For example, this module is ideal for connecting the latest GPU servers (such as systems based on NVIDIA H100 GPUs) to top-of-rack switches using InfiniBand or 800G Ethernet. It’s also used for data centre interconnect within a campus or large facility – for instance, linking one row of racks to another at 800G capacity when the distance is under 500 m. In general, any scenario that involves moving massive data volumes with low latency across relatively short distances (inside one data centre or campus) is a good fit – including distributed AI model training, supercomputer clusters, and advanced cloud infrastructure.