DLAP‑701 NVIDIA Jetson Thor compact edge AI platform

The DLAP-701 is a compact edge AI platform powered by the NVIDIA Jetson Thor series. Designed for demanding edge AI workloads, from generative AI inference to sensor fusion, robotics, industrial automation, and autonomous systems, the DLAP-701 brings data-centre-class performance into a deployable, edge-ready form factor. With up to 2070 FP4 TFLOPS of AI compute and broad I/O connectivity, it’s built for environments where real-time processing, privacy, and reliability are essential.

Whether you’re running large language models (LLMs), vision-language models, multi-sensor pipelines, or real-time control loops, the DLAP-701 delivers the hardware foundation needed for next-gen edge AI applications.

The DLAP-701 is ideal for advanced robotics engineers, AI researchers, industrial automation architects, and organisations building autonomous machines, smart infrastructure, or edge-deployed AI systems. If you need to run large AI models (language, vision, sensor fusion, control) locally, with low latency and high reliability, this platform provides the compute, memory, and I/O to do so.

Range features

A high-level overview of what this range offers

-

Massive AI compute – up to 2070 FP4 TFLOPS Powered by the Blackwell GPU inside Jetson Thor, enabling multi-model generative AI workloads and large transformer inference at the edge.

-

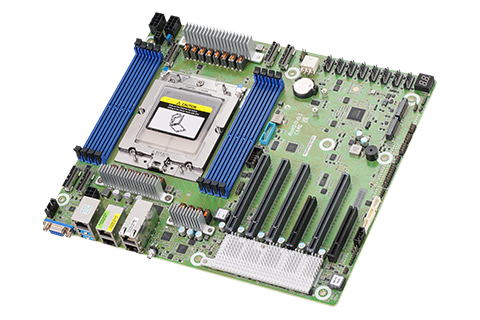

High-performance CPU & memory for balanced workloads 14-core Arm Neoverse-V3AE CPU plus 128 GB LPDDR5X memory with 273 GB/s bandwidth – ideal for memory-heavy models, sensor fusion, pre-processing, and system orchestration.

-

Real-time edge inference – Low latency & LLM deployment Run large models offline without cloud latency, ensuring data privacy and deterministic performance.

-

Multi-Task / multi-model support (MIG GPU Partitioning) The GPU supports Multi-Instance GPU (MIG) mode – enabling parallel inference tasks (e.g., vision + language + control) simultaneously on the same hardware.

-

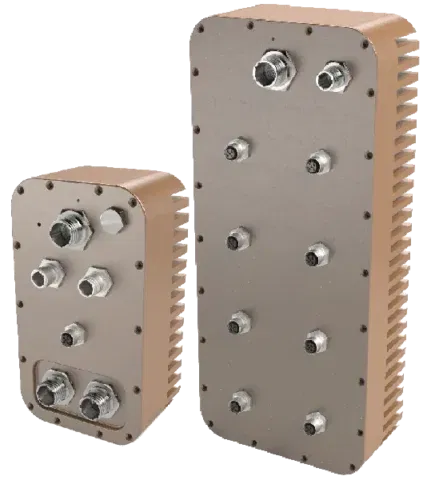

Rich, high-speed I/O & sensor integration Multiple interfaces: 5 GbE, USB 3.0, M.2 (Wi-Fi/LTE), QSFP28 (4× 25 GbE) — ideal for high-bandwidth sensor data, multi-camera rigs, LiDAR, high-speed networking, and real-time data ingestion.

-

Scalable, enterprise-grade edge deployment Compact form factor for edge or embedded deployment, with support for NVMe storage, flexible power envelope (40–130 W), and broad I/O – making it suitable for robotics, industrial automation, infrastructure, or mobile platforms.

-

Optimised for modern AI & robotics software stack Fully compatible with NVIDIA’s AI software ecosystem, enabling easy deployment of large language models, vision-language models, sensor-fusion algorithms, robotics frameworks, and more, all on edge hardware.

-

Future-Ready – multi-modal AI, robotics, and autonomous use-cases Built to handle multi-sensor inputs (cameras, LiDAR, data buses), vision + language + control pipelines, and real-time reasoning, empowering next-generation physical AI systems like robots, autonomous machines, or smart infrastructure.
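To make the memory figures above more concrete, the following Python sketch estimates how large a model's weights are at a given precision, whether they fit in 128 GB, and a rough ceiling on single-stream decode speed. It is a back-of-envelope illustration only. The 70-billion-parameter model, the 80% usable-memory share, and the rule of thumb that each generated token reads every weight once are assumptions, not vendor figures, and the estimate ignores the KV cache, activations, and operating system overhead.

```python
# Figures quoted in the feature list above.
MEMORY_GB = 128.0       # LPDDR5X capacity
BANDWIDTH_GB_S = 273.0  # peak memory bandwidth


def weight_footprint_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8.0


def fits_in_memory(footprint_gb: float, usable_fraction: float = 0.8) -> bool:
    """True if the weights fit in the assumed usable share of memory.

    usable_fraction is an assumption that leaves headroom for the KV cache,
    activations, and the operating system.
    """
    return footprint_gb <= MEMORY_GB * usable_fraction


def decode_tokens_per_s_ceiling(footprint_gb: float) -> float:
    """Rough upper bound on single-stream decode rate.

    Assumes decoding is memory-bandwidth bound and that every weight is
    read once per generated token.
    """
    return BANDWIDTH_GB_S / footprint_gb


if __name__ == "__main__":
    params_billion = 70.0  # hypothetical model size, for illustration only
    for bits in (16, 8, 4):
        size = weight_footprint_gb(params_billion, bits)
        print(
            f"{bits:>2}-bit weights: {size:6.1f} GB, "
            f"fits={fits_in_memory(size)}, "
            f"ceiling={decode_tokens_per_s_ceiling(size):.1f} tokens/s"
        )
```

Under these assumptions, lower-precision weights both reduce the footprint and raise the bandwidth-bound ceiling, which is why 4-bit and 8-bit formats matter for running large models on a single edge device. Measured throughput will depend on the model, runtime, and batch size.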


What’s in this range?

All the variants in the range and a comparison of what they offer

| Specification | DLAP-701 (Jetson Thor) / Typical Setup* |
|---|---|
| AI Compute Performance | Up to 2070 FP4 TFLOPS |
| GPU Architecture | NVIDIA Blackwell GPU: 2560 CUDA cores, 96 5th-gen Tensor Cores, MIG support |
| CPU | 14-core Arm Neoverse-V3AE 64-bit CPU, up to 2.6 GHz |
| Memory | 128 GB LPDDR5X, 256-bit, ~273 GB/s bandwidth |
| Storage | NVMe via M.2 slot / PCIe Gen5 support |
| Networking / I/O | 5 GbE, QSFP28 (4× 25 GbE), USB 3.x, M.2 (Wi-Fi / LTE), PCIe, multiple sensor interfaces; suited to high-bandwidth sensor fusion |
| Power Envelope | ~40 W to 130 W (configurable), depending on load and deployment scenario |
| Use-Case Focus | Edge AI / Inference, robotics, multi-sensor fusion, real-time LLM/VLM inference, industrial automation, autonomous systems |
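As a rough guide to what the networking figures in the table mean for multi-camera rigs, the Python sketch below estimates how many uncompressed video streams a link could carry. The camera parameters (1920×1080, 24 bits per pixel, 30 fps) are hypothetical, and the calculation treats the QSFP28 port as 4 × 25 = 100 Gb/s of aggregate line rate with no protocol overhead, so treat the results as upper bounds rather than tested capacities.

```python
import math

# Link rates taken from the table above, in Gb/s.
QSFP28_GBPS = 4 * 25.0  # four 25 GbE lanes, aggregate line rate
ETHERNET_GBPS = 5.0     # 5 GbE port


def stream_bits_per_s(width: int, height: int, bits_per_pixel: int, fps: int) -> int:
    """Raw bit rate of one uncompressed video stream."""
    return width * height * bits_per_pixel * fps


def max_streams(link_gbps: float, stream_bps: int, usable_fraction: float = 1.0) -> int:
    """Whole number of streams that fit on a link.

    usable_fraction is an assumption for protocol overhead; 1.0 means none.
    """
    return math.floor(link_gbps * 1e9 * usable_fraction / stream_bps)


if __name__ == "__main__":
    # Hypothetical camera: 1080p, 24-bit colour, 30 frames per second.
    camera_bps = stream_bits_per_s(1920, 1080, 24, 30)
    print(f"One stream: {camera_bps / 1e9:.2f} Gb/s")
    print(f"QSFP28 (4x 25 GbE): up to {max_streams(QSFP28_GBPS, camera_bps)} streams")
    print(f"5 GbE: up to {max_streams(ETHERNET_GBPS, camera_bps)} streams")
```

Compressed streams or lower frame rates would raise these counts considerably, while real-world overhead and downstream processing limits would lower them.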